Today, virtual-reality experts look back on the platform as the first interactive augmented-reality system that enabled users to engage simultaneously with real and virtual objects in a single immersive reality.

The project began in 1991, when I pitched the effort as part of my doctoral research at Stanford University. By the time I finished, three years and several prototypes later, the system I had assembled filled half a room and used nearly a million dollars’ worth of hardware. And I had collected enough data from human testing to definitively show that augmenting a real workspace with virtual objects could significantly enhance user performance in precision tasks.

Given the short time frame, it may sound like all went smoothly, but the project came close to getting derailed several times, thanks to a tight budget and substantial equipment needs. In fact, the effort might have crashed early on, had a parachute (a real one, not a virtual one) not failed to open in the clear blue skies over Dayton, Ohio, during the summer of 1992.

Before I explain how a parachute accident helped drive the development of augmented reality, I’ll lay out a little of the historical context.

Thirty years ago, the field of virtual reality was in its infancy, the phrase itself having only been coined in 1987 by Jaron Lanier, who was commercializing some of the first headsets and gloves. His work built on earlier research by Ivan Sutherland, who pioneered head-mounted display technology and head-tracking, two critical elements that sparked the VR field. Augmented reality (AR), that is, combining the real world and the virtual world into a single immersive and interactive reality, did not yet exist in a meaningful way.

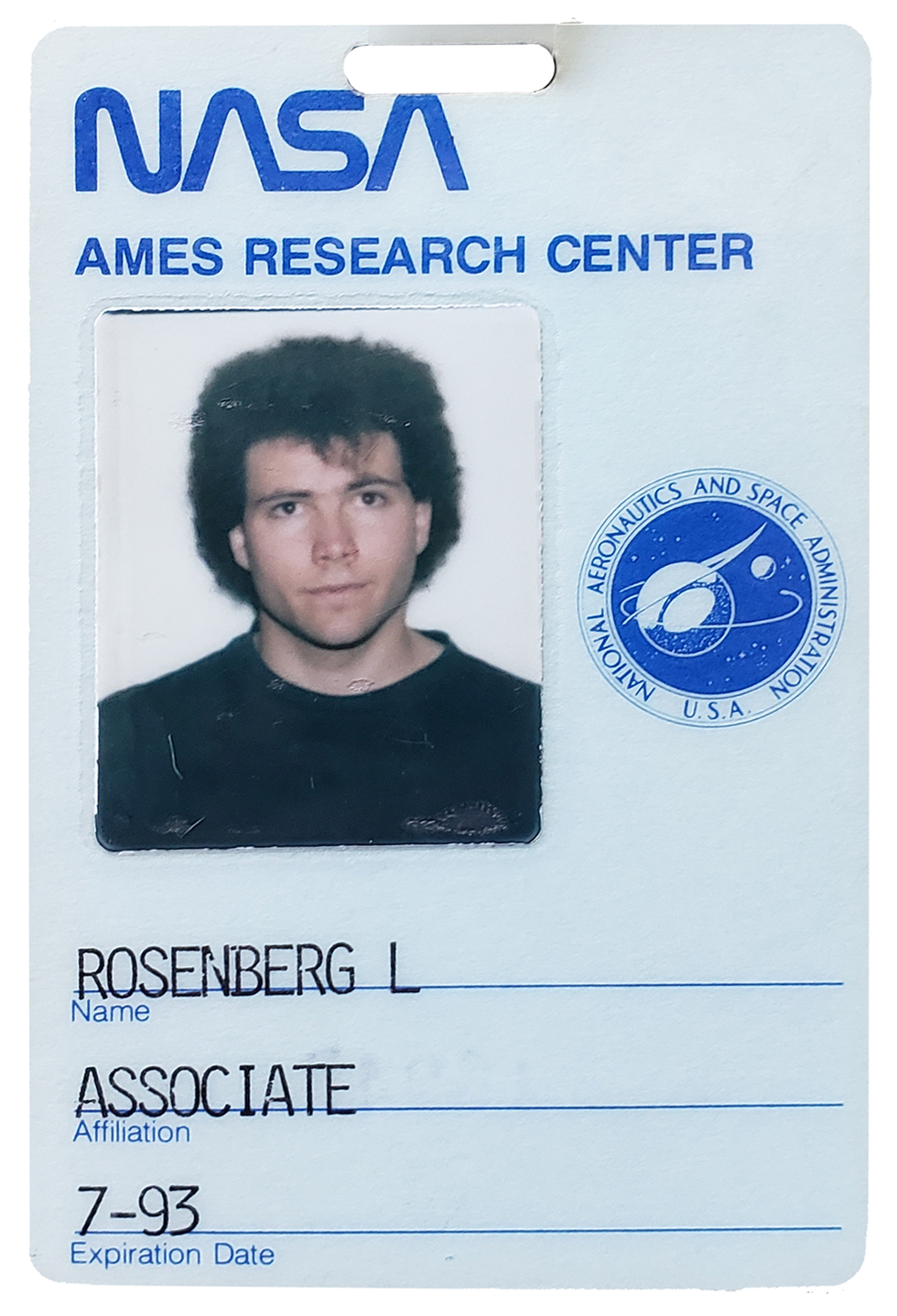

Back then, I was a graduate student at Stanford University and a part-time researcher at NASA’s Ames Research Center, interested in the creation of virtual worlds. At Stanford, I worked in the Center for Design Research, a group focused on the intersection of humans and technology that created some of the very early VR gloves, immersive vision systems, and 3D audio systems. At NASA, I worked in the Advanced Displays and Spatial Perception Laboratory of the Ames Research Center, where researchers were exploring the fundamental parameters required to enable realistic and immersive simulated worlds.

Of course, knowing how to create a quality VR experience and being able to produce it are not the same thing. The best PCs on the market back then used Intel 486 processors running at 33 megahertz. Adjusted for inflation, they cost about US $8,000 and weren’t even a thousandth as fast as a cheap gaming computer today. The other option was to invest $60,000 in a Silicon Graphics workstation, still less than a hundredth as fast as a mediocre PC today. So, though researchers working in VR during the late 1980s and early 1990s were doing groundbreaking work, the crude graphics, bulky headsets, and lag so bad it made people dizzy or nauseous plagued the resulting virtual experiences.

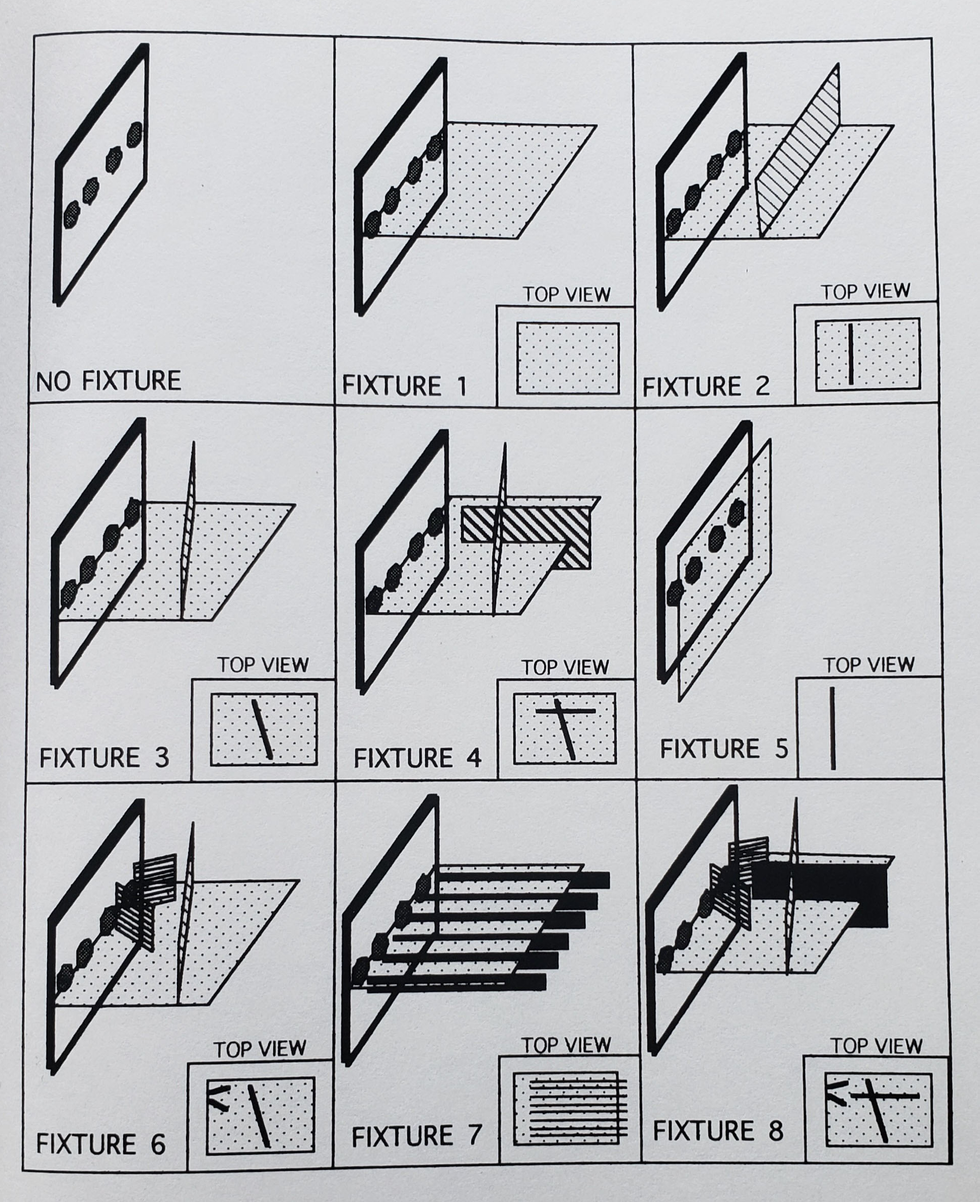

These early drawings of a real pegboard combined with virtual overlays generated by a computer, an early version of augmented reality, were created by Louis Rosenberg as part of his Virtual Fixtures project. Louis Rosenberg

I was conducting a research project at NASA to optimize depth perception in early 3D-vision systems, and I was one of those people getting dizzy from the lag. And I found that the images created back then were definitely virtual but far from reality.

Still, I wasn’t discouraged by the dizziness or the low fidelity, because I was sure the hardware would steadily improve. Instead, I was concerned about how enclosed and isolated the VR experience made me feel. I wished I could expand the technology, taking the power of VR and unleashing it into the real world. I dreamed of creating a merged reality where virtual objects inhabited your physical surroundings in such an authentic manner that they seemed like genuine parts of the world around you, enabling you to reach out and interact as if they were actually there.

I was aware of one very basic sort of merged reality, the head-up display, in use by military pilots, enabling flight data to appear in their lines of sight so they didn’t have to look down at cockpit gauges. I hadn’t experienced such a display myself, but became familiar with them thanks to a few blockbuster 1980s hit movies, including Top Gun and Terminator. In Top Gun, a glowing crosshair appeared on a glass panel in front of the pilot during dogfights; in Terminator, crosshairs joined text and numerical data as part of the fictional cyborg’s view of the world around it.

Neither of these merged realities was the slightest bit immersive, presenting images on a flat plane rather than connected to the real world in 3D space. But they hinted at interesting possibilities. I thought I could go far beyond simple crosshairs and text on a flat plane to create virtual objects that could be spatially registered to real objects in an ordinary environment. And I hoped to instill those virtual objects with realistic physical properties.

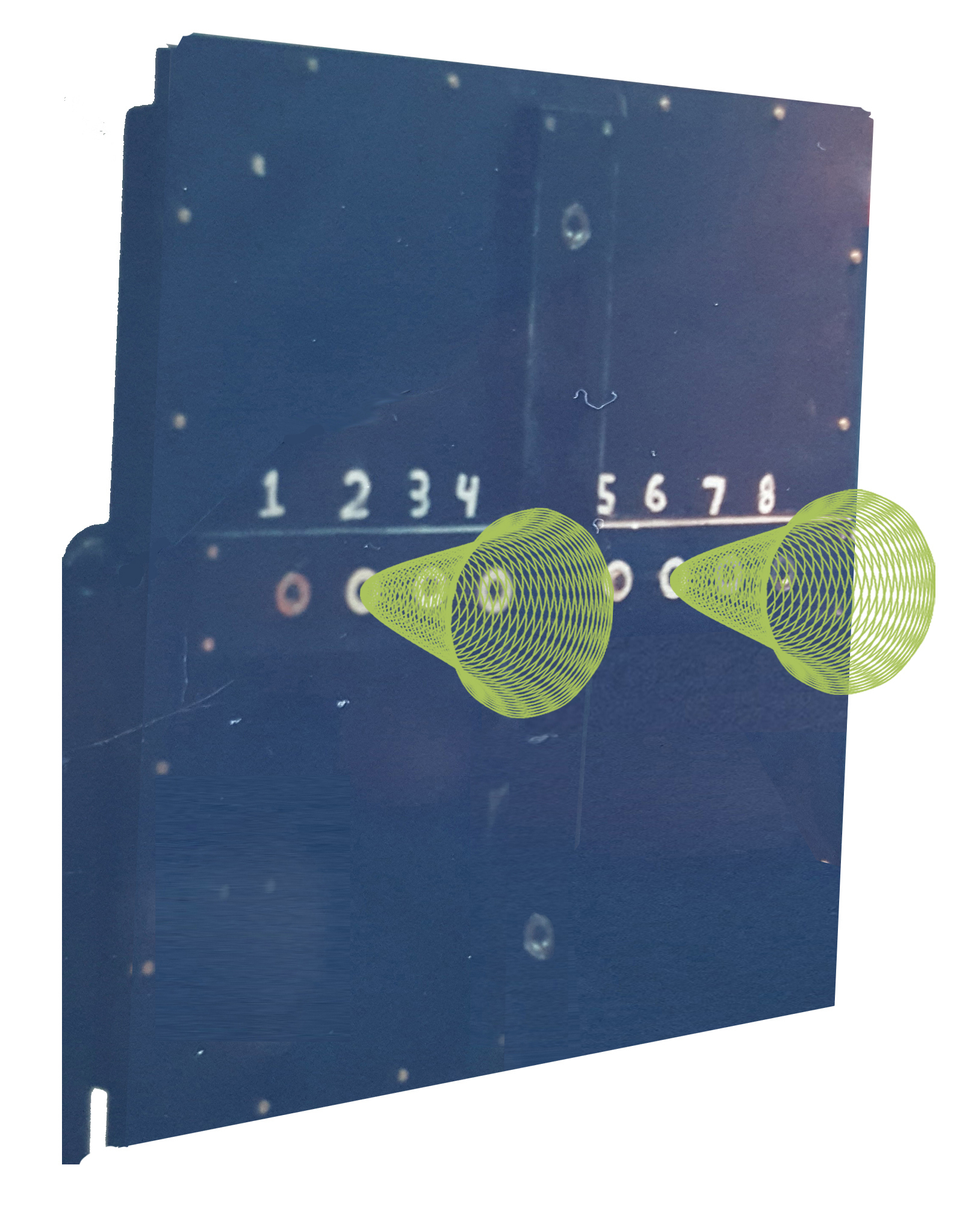

The Fitts’s Law peg-insertion task involves having test subjects quickly move metal pegs between holes. The board shown here was real, the cones that helped guide the user to the correct holes virtual. Louis Rosenberg

I needed substantial resources, beyond what I had access to at Stanford and NASA, to pursue this vision. So I pitched the concept to the Human Sensory Feedback Group of the U.S. Air Force’s Armstrong Laboratory, now part of the Air Force Research Laboratory.

To explain the practical value of merging real and virtual worlds, I used the analogy of a simple metal ruler. If you want to draw a straight line in the real world, you can do it freehand, going slow and using significant mental effort, and it still won’t be particularly straight. Or you can grab a ruler and do it much quicker with far less mental effort. Now imagine that instead of a real ruler, you could grab a virtual ruler and make it instantly appear in the real world, perfectly registered to your real surroundings. And imagine that this virtual ruler feels physically authentic, so much so that you can use it to guide your real pencil. Because it’s virtual, it can be any shape and size, with interesting and useful properties that you could never achieve with a metal straightedge.

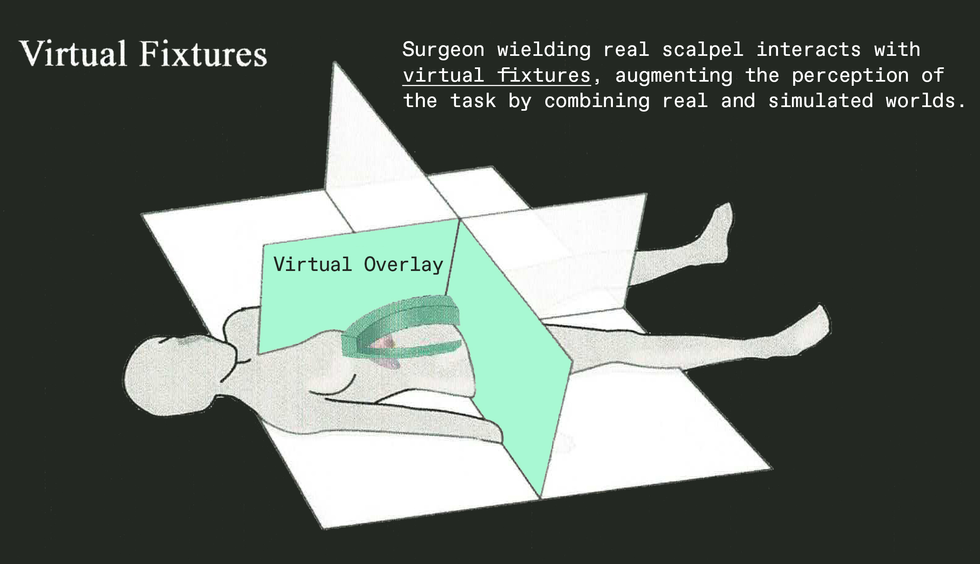

Of course, the ruler was just an analogy. The applications I pitched to the Air Force ranged from augmented manufacturing to surgery. For example, consider a surgeon who needs to make a dangerous incision. She could use a bulky metal fixture to steady her hand and avoid vital organs. Or we could invent something new to augment the surgery: a virtual fixture to guide her real scalpel, not just visually but physically. Because it’s virtual, such a fixture would pass right through the patient’s body, sinking into tissue before a single cut had been made. That was the concept that got the military excited, and their interest wasn’t just for in-person tasks like surgery but for distant tasks performed using remotely controlled robots. For example, a technician on Earth could repair a satellite by controlling a robot remotely, assisted by virtual fixtures added to video images of the real worksite. The Air Force agreed to provide enough funding to cover my expenses at Stanford along with a small budget for equipment. Perhaps more significantly, I also got access to computers and other equipment at Wright-Patterson Air Force Base near Dayton, Ohio.

And what became known as the Virtual Fixtures Project came to life, working toward building a prototype that could be rigorously tested with human subjects. And I became a roving researcher, developing core ideas at Stanford, fleshing out some of the underlying technologies at NASA Ames, and assembling the full system at Wright-Patterson.

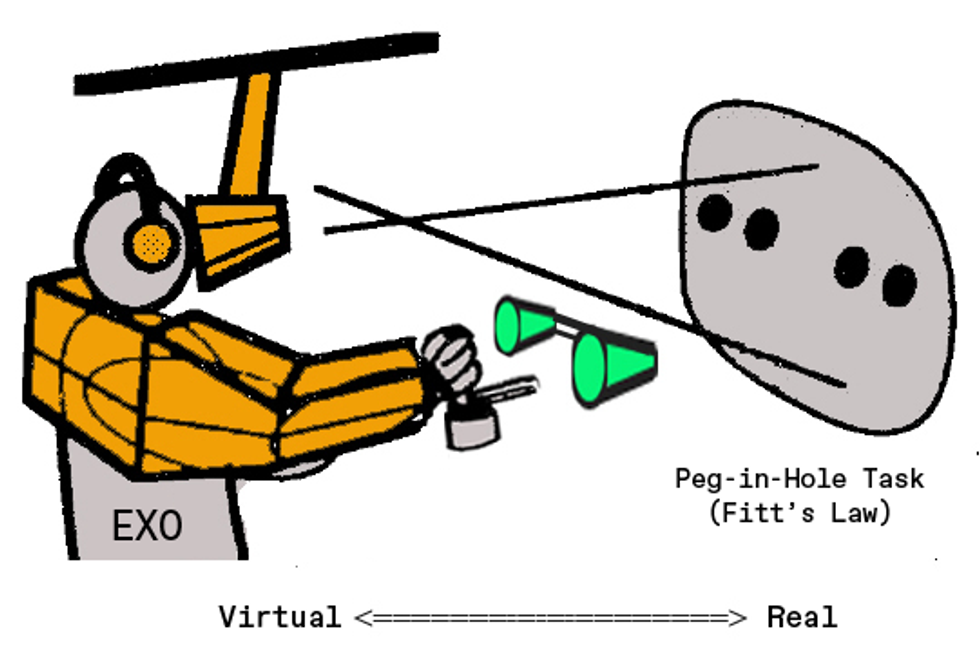

In this sketch of his augmented-reality system, Louis Rosenberg shows a user of the Virtual Fixtures platform wearing a partial exoskeleton and peering at a real pegboard augmented with cone-shaped virtual fixtures. Louis Rosenberg

Now about those parachutes.

As a young researcher in my early twenties, I was eager to learn about the many projects going on around me at these various laboratories. One effort I followed closely at Wright-Patterson was a project designing new parachutes. As you might expect, when the research team came up with a new design, they didn’t just strap a person in and test it. Instead, they attached the parachutes to dummy rigs fitted with sensors and instrumentation. Two engineers would go up in an airplane with the hardware, dropping rigs and jumping alongside so they could observe how the chutes unfolded. Stick with my story and you’ll see how this became key to the development of that early AR system.

Back at the Virtual Fixtures effort, I aimed to prove the basic concept: that a real workspace could be augmented with virtual objects that feel so real, they could assist users as they performed dexterous manual tasks. To test the idea, I wasn’t going to have users perform surgery or repair satellites. Instead, I needed a simple repeatable task to quantify manual performance. The Air Force already had a standardized task it had used for years to test human dexterity under a variety of mental and physical stresses. It’s called the Fitts’s Law peg-insertion task, and it involves having test subjects quickly move metal pegs between holes on a large pegboard.
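The task takes its name from Fitts’s law, which predicts that the time to reach a target grows with the logarithm of the ratio between travel distance and target width. The Python sketch below uses Fitts’s original formulation of the index of difficulty, log2(2D/W). The function names, the regression constants `a` and `b`, and the sample distances are hypothetical values chosen for illustration, not figures from the Air Force task or the Virtual Fixtures trials.

```python
import math


def index_of_difficulty(distance: float, width: float) -> float:
    """Fitts's original index of difficulty, in bits: log2(2D / W)."""
    if distance <= 0 or width <= 0:
        raise ValueError("distance and width must be positive")
    return math.log2(2.0 * distance / width)


def predicted_movement_time(distance: float, width: float,
                            a: float, b: float) -> float:
    """Predicted movement time MT = a + b * ID.

    a (seconds) and b (seconds per bit) are fitted per subject and
    device from measured trials; the values used below are made up.
    """
    return a + b * index_of_difficulty(distance, width)


if __name__ == "__main__":
    # Hypothetical pegboard move: holes 8 units apart, 1 unit wide.
    bits = index_of_difficulty(8.0, 1.0)
    seconds = predicted_movement_time(8.0, 1.0, a=0.1, b=0.15)
    print(f"ID = {bits:.2f} bits, predicted MT = {seconds:.2f} s")
```

Under this model, a fixture that helps the user would show up as a smaller fitted slope `b`, meaning less time per bit of difficulty.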

So I began assembling a system that would enable virtual fixtures to be merged with a real pegboard, creating a mixed-reality experience perfectly registered in 3D space. I aimed to make these virtual objects feel so real that bumping the real peg into a virtual fixture would feel as authentic as bumping into the actual board.

I wrote software to simulate a wide range of virtual fixtures, from simple surfaces that prevented your hand from overshooting a target hole, to carefully shaped cones that could help a user guide the real peg into the real hole. I created virtual overlays that simulated textures and had corresponding sounds, even overlays that simulated pushing through a thick liquid as if it were virtual honey.

One imagined use for augmented reality at the time of its creation was in surgery. Today, augmented reality is used for surgical training, and surgeons are beginning to use it in the operating room. Louis Rosenberg

For added realism, I modeled the physics of each virtual element, registering its location accurately in three dimensions so it lined up with the user’s perception of the real wooden board. Then, when the user moved a hand into an area corresponding to a virtual surface, motors in the exoskeleton would physically push back, an interface technology now commonly known as “haptics.” It indeed felt so authentic that you could slide along the edge of a virtual surface the way you might move a pencil against a real ruler.
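One common way to make a virtual surface push back is a penalty (spring) model: the deeper the hand penetrates the surface, the harder the motors push it out along the surface normal. The Python sketch below illustrates that general technique only. It is not the code the project ran, and the `VirtualPlane` class, the `wall_force` function, and the stiffness value are all hypothetical.

```python
from dataclasses import dataclass
from typing import Tuple

Vec3 = Tuple[float, float, float]


def sub(a: Vec3, b: Vec3) -> Vec3:
    return (a[0] - b[0], a[1] - b[1], a[2] - b[2])


def dot(a: Vec3, b: Vec3) -> float:
    return a[0] * b[0] + a[1] * b[1] + a[2] * b[2]


def scale(a: Vec3, s: float) -> Vec3:
    return (a[0] * s, a[1] * s, a[2] * s)


@dataclass(frozen=True)
class VirtualPlane:
    """A flat virtual fixture (hypothetical model)."""
    point: Vec3       # any point on the surface, in metres
    normal: Vec3      # unit normal pointing into free space
    stiffness: float  # spring constant in N/m (assumed value)


def wall_force(plane: VirtualPlane, hand: Vec3) -> Vec3:
    """Force to command on the hand for one control cycle.

    Outside the surface the force is zero. Inside, it is a spring
    force proportional to penetration depth, directed along the
    normal. There is no tangential term, so the hand is free to
    slide along the surface like a pencil along a ruler.
    """
    signed_distance = dot(sub(hand, plane.point), plane.normal)
    if signed_distance >= 0.0:
        return (0.0, 0.0, 0.0)
    depth = -signed_distance
    return scale(plane.normal, plane.stiffness * depth)


if __name__ == "__main__":
    table = VirtualPlane(point=(0.0, 0.0, 0.0),
                         normal=(0.0, 0.0, 1.0),
                         stiffness=1000.0)
    print(wall_force(table, (0.1, 0.0, 0.01)))    # above the surface
    print(wall_force(table, (0.1, 0.0, -0.002)))  # 2 mm inside it
```

A real haptic loop would run a calculation like this at a high, fixed rate and usually add damping to keep the contact stable; both are omitted here for brevity.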

To accurately align these virtual elements with the real pegboard, I needed high-quality video cameras. Video cameras at the time were far more expensive than they are today, and I had no money left in my budget to buy them. This was a frustrating barrier: The Air Force had given me access to a wide range of amazing hardware, but when it came to simple cameras, they couldn’t help. It seemed like every research project needed them, most of far higher priority than mine.

Which brings me back to the skydiving engineers testing experimental parachutes. These engineers came into the lab one day to chat; they mentioned that their chute had failed to open, their dummy rig plummeting to the ground and destroying all the sensors and cameras aboard.

This seemed like it would be a setback for my project as well, because I knew if there were any extra cameras in the building, the engineers would get them.

But then I asked if I could take a look at the wreckage from their failed test. It was a mangled mess of bent metal, dangling circuits, and smashed cameras. Still, though the cameras looked awful with cracked cases and damaged lenses, I wondered if I could get any of them to work well enough for my needs.

By some miracle, I was able to piece together two working units from the six that had plummeted to the ground. And so, the first human testing of an interactive augmented-reality system was made possible by cameras that had literally fallen out of the sky and smashed into the earth.

To appreciate how important these cameras were to the system, think of a simple AR application today, like Pokémon Go. If you didn’t have a camera on the back of your phone to capture and display the real world in real time, it wouldn’t be an augmented-reality experience; it would just be a standard video game.

The same was true for the Virtual Fixtures system. But thanks to the cameras from that failed parachute rig, I was able to create a mixed reality with accurate spatial registration, providing an immersive experience in which you could reach out and interact with the real and virtual environments simultaneously.

As for the experimental part of the project, I conducted a series of human studies in which users experienced a variety of virtual fixtures overlaid onto their perception of the real task board. The most useful fixtures turned out to be cones and surfaces that could guide the user’s hand as they aimed the peg toward a hole. The most effective involved physical experiences that couldn’t be easily manufactured in the real world but were readily achievable virtually. For example, I coded virtual surfaces that were “magnetically attractive” to the peg. For the users, it felt as if the peg had snapped to the surface. Then they could glide along it until they chose to yank free with another snap. Such fixtures increased speed and dexterity in the trials by more than 100 percent.
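The snap, glide, and yank-free behavior can be approximated with a spring that switches on when the peg gets close to the surface and switches off only once the peg has been pulled noticeably farther away. The Python sketch below is one hypothetical way to model that; the `SnapFixture` class, its thresholds, and its stiffness are assumptions for illustration, not the original implementation.

```python
from dataclasses import dataclass


@dataclass
class SnapFixture:
    """A 'magnetically attractive' surface along one axis (hypothetical).

    signed_distance is the peg's distance from the surface along the
    surface normal, in metres. Motion parallel to the surface is left
    unconstrained, which is what lets the peg glide.
    """
    stiffness: float = 800.0         # N/m, assumed
    capture_distance: float = 0.005  # attach when closer than this
    release_distance: float = 0.015  # detach when pulled past this
    attached: bool = False

    def force_along_normal(self, signed_distance: float) -> float:
        gap = abs(signed_distance)
        if self.attached:
            # Breaking free takes a larger gap than capture did,
            # so the release is felt as a second snap.
            if gap > self.release_distance:
                self.attached = False
        elif gap <= self.capture_distance:
            self.attached = True

        if not self.attached:
            return 0.0
        # Spring pulling the peg back toward the surface.
        return -self.stiffness * signed_distance


if __name__ == "__main__":
    fixture = SnapFixture()
    for d in (0.020, 0.004, 0.010, 0.020, 0.010):
        force = fixture.force_along_normal(d)
        print(f"distance {d:.3f} m -> force {force:.1f} N, "
              f"attached={fixture.attached}")
```

The gap between the capture and release thresholds is the design choice that matters here: with a single threshold the peg would chatter on and off at the boundary instead of holding until the user deliberately pulls away.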

Of the various applications for Virtual Fixtures that we considered at the time, the most commercially viable back then involved manually controlling robots in remote or dangerous environments, for example, during hazardous waste clean-up. If the communications distance introduced a time delay in the telerobotic control, virtual fixtures became even more valuable for enhancing human dexterity.

Today, researchers are still exploring the use of virtual fixtures for telerobotic applications with great success, including for use in satellite repair and robot-assisted surgery.

Louis Rosenberg spent some of his time working in the Advanced Displays and Spatial Perception Laboratory of the Ames Research Center as part of his research in augmented reality. Louis Rosenberg

I went in a different direction, pushing for more mainstream applications for augmented reality. That’s because the part of the Virtual Fixtures project that had the greatest impact on me personally wasn’t the improved performance in the peg-insertion task. Instead, it was the big smiles that lit up the faces of the human subjects when they climbed out of the system and effused about what a remarkable experience they had had. Many told me, without prompting, that this type of technology would one day be everywhere.

And indeed, I agreed with them. I was convinced we’d see this type of immersive technology go mainstream by the end of the 1990s. In fact, I was so inspired by the enthusiastic reactions people had when they tried those early prototypes, I founded a company in 1993, Immersion, with the goal of pursuing mainstream consumer applications. Of course, it hasn’t happened nearly that fast.

At the risk of being wrong again, I sincerely believe that virtual and augmented reality, now commonly referred to as the metaverse, will become an important part of most people’s lives by the end of the 2020s. In fact, based on the recent surge of investment by major corporations into improving the technology, I predict that by the early 2030s augmented reality will replace the mobile phone as our primary interface to digital content.

And no, none of the test subjects who experienced that early glimpse of augmented reality 30 years ago knew they were using hardware that had fallen out of an airplane. But they did know that they were among the first to reach out and touch our augmented future.
